Every API call starts from zero. The model doesn't remember your last message. It re-reads the entire conversation — system prompt, tool definitions, every message, every tool result — from scratch. Every single time.

That means your context window isn't memory. It's a budget. And you're spending it faster than you think.

Claude advertises 200k tokens. That's roughly 150,000 words. Sounds massive. But output has its own cap, typically 8–64k tokens, and features like compaction reserve space on top of that. The usable window is smaller than 200k before you type a single message.
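Here's that subtraction as a rough sketch. The 200k window and the 64k output cap come from the numbers above; the 20k compaction reserve and the function name `usable_window` are placeholders made up for illustration, not documented values.

```shell
# usable_window ADVERTISED OUTPUT_RESERVE COMPACTION_RESERVE
# Prints the tokens left for input once both reservations are subtracted.
usable_window() {
    echo $(( $1 - $2 - $3 ))
}

# 200k advertised, 64k held back for output, 20k (assumed) for compaction.
usable_window 200000 64000 20000
```

With those inputs it prints 116000. Plug in your own tool's numbers; the point is only that the budget shrinks before the conversation starts.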

The dumb zone

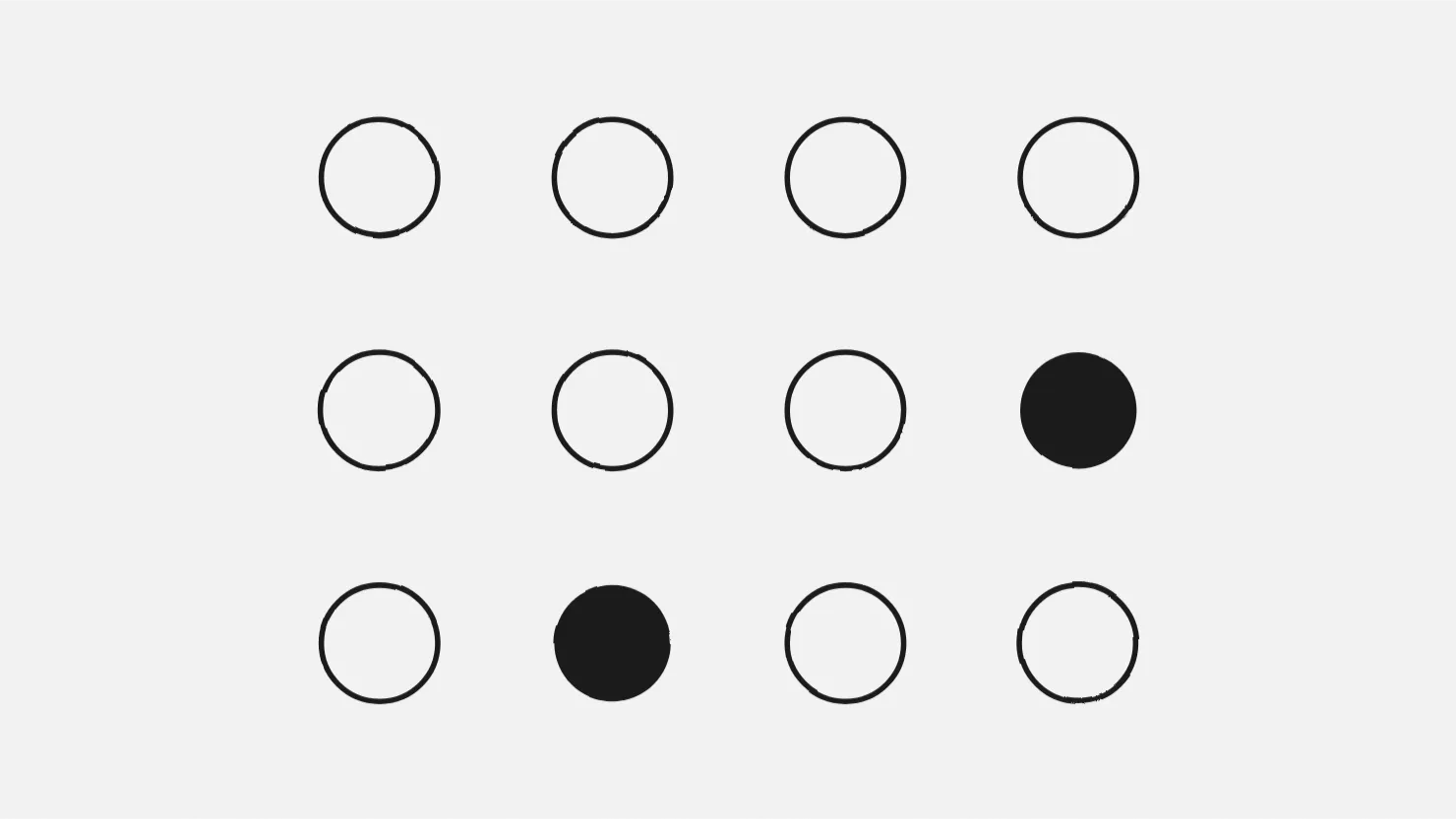

Researchers have consistently found that model performance follows a U-curve across the context window. Information at the beginning and end gets attention. Everything in the middle gets lost. The original Stanford paper called it "lost in the middle" in 2023, and Chroma Research confirmed it still holds across 18 current models in 2025. It's not a bug being fixed. It's architectural.

In practice, this means your context window has quality zones. The first 40% or so is clean — the model follows instructions, output is precise. Between 40–70%, it starts cutting corners. Past 70%, you're in what Geoffrey Huntley calls the dumb zone. Instructions get ignored. Hallucinations increase. The model isn't broken. It's drowning in tokens.
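If you want to eyeball which zone a session is in, a tiny classifier is enough. This is a sketch: the 40% and 70% cutoffs are the rough figures from the paragraph above, not hard limits, and the zone names and the `context_zone` function are invented for illustration.

```shell
# context_zone USED_TOKENS WINDOW_TOKENS
# Uses integer percent, so 70.9% is treated as 70.
context_zone() {
    pct=$(( $1 * 100 / $2 ))
    if [ "$pct" -lt 40 ]; then
        echo "clean"
    elif [ "$pct" -le 70 ]; then
        echo "degraded"
    else
        echo "dumb"
    fi
}

context_zone 60000 200000    # 30% of the window
context_zone 110000 200000   # 55%
context_zone 150000 200000   # 75%
```

The three calls print clean, degraded, and dumb, in that order.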

A 200k window at 70% is 140k tokens. That sounds like a lot of runway before things go wrong. But system prompts, tool definitions, and MCP server configs eat a chunk before you type a single message. In a coding agent session with a few file reads and tool calls, you can hit 70% faster than you'd expect.
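To make "faster than you'd expect" concrete, here's a back-of-the-envelope sketch. The 30k of fixed overhead and the 5k tokens per turn are invented numbers for illustration, not measurements; `turns_until_threshold` is a hypothetical helper.

```shell
# turns_until_threshold WINDOW OVERHEAD TOKENS_PER_TURN [THRESHOLD_PCT]
# Prints how many turns fit before usage crosses the threshold (default 70%).
turns_until_threshold() {
    threshold_pct=${4:-70}
    budget=$(( $1 * threshold_pct / 100 - $2 ))
    echo $(( budget / $3 ))
}

# Hypothetical session: 30k of system prompt and tool definitions,
# then 5k tokens added per turn by file reads and tool results.
turns_until_threshold 200000 30000 5000
```

Under those assumptions it prints 22: the 140k budget drops to 110k after overhead, and 5k per turn burns through that in 22 turns. A couple of large file reads per turn shortens it further.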

Context poisoning

Here's what actually fills your context: noise. You try an approach, it fails. You try another. The first attempt doesn't disappear — it sits there, confusing the model. Old file contents, abandoned instructions, contradictory guidance from 20 messages ago. The model treats it all as equally valid. It can't tell current intent from stale context.

This is context poisoning. And it compounds — agent success rates measurably drop after about 35 minutes of continuous operation.

Why compaction doesn't save you

When context fills up, tools try to save you by summarizing older messages. Sounds reasonable. But summarizing loses specifics — a file path becomes "the auth module," an exact error becomes "a type error." And compressed noise is still noise.

Worse: compaction keeps you near the ceiling. You compact from 90% down to 70% and you're still in the dumb zone. You never get back to clean.

The fix: /clear often

The counterintuitive move: throw it away. Start fresh. A new context with just your CLAUDE.md and the current task puts you at maybe 5% of capacity — deep in the high-quality zone. Your project instructions reload at the top of the window, exactly where the model pays the most attention.
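Side by side, the numbers look like this. A sketch using the figures from the text (90% before compaction, 70% after, about 5% after a clear) against a 200k window; the token counts are just those percentages multiplied out, and `pct_used` is a hypothetical helper.

```shell
# pct_used TOKENS WINDOW
# Prints the integer percentage of the window in use.
pct_used() {
    echo $(( $1 * 100 / $2 ))
}

window=200000
echo "before compaction: $(pct_used 180000 "$window")%"
echo "after compaction:  $(pct_used 140000 "$window")%"
echo "after /clear:      $(pct_used 10000 "$window")%"
```

It prints 90%, 70%, and 5%. Compaction buys you 20 points of headroom; clearing buys you 85.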

Clear between tasks. Clear when the agent starts repeating mistakes. Clear when you feel output quality dipping. It's free and it works better than any clever context management trick.

The Ralph Loop

Geoffrey Huntley took this idea to its logical extreme with the Ralph Loop — a bash loop that runs a coding agent repeatedly, each iteration getting a fresh context with the full spec reloaded:

    while :; do cat PROMPT.md | claude-code ; done

One task per iteration. Fresh context every time. The spec files are the durable part; the code is disposable, reshaped every iteration. He documented completing a $50,000 contract for $297 in compute costs using this pattern.

It works because it sidesteps every problem above: no dumb zone, no poisoning, no compaction. Just a clean window, a clear spec, and one focused task.
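The original one-liner runs until you kill it. If you'd rather cap it, here's a bounded sketch of the same idea. This is not Huntley's version: the iteration limit, the `AGENT_CMD` variable, and the stop-on-failure behavior are all additions for illustration. `claude-code` is the command name from the loop above; substitute whatever agent CLI you actually run.

```shell
# ralph_loop PROMPT_FILE [MAX_ITERATIONS]
# Runs the agent once per iteration, feeding it the spec on stdin.
# AGENT_CMD (hypothetical knob) overrides the command; default is claude-code.
ralph_loop() {
    prompt_file=$1
    max_iterations=${2:-10}
    agent_cmd=${AGENT_CMD:-claude-code}
    i=1
    while [ "$i" -le "$max_iterations" ]; do
        echo "--- iteration $i: fresh context ---" >&2
        # Each run is a new process, so nothing carries over between
        # iterations except what is on disk (the spec and the code).
        $agent_cmd < "$prompt_file" || return 1
        i=$((i + 1))
    done
}

# Usage (not run here): ralph_loop PROMPT.md 5
```

Stopping on a non-zero exit is a design choice so a misconfigured command doesn't spin; drop the `|| return 1` if you want the loop to push through failures.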

This is context engineering

The discipline of managing what goes into the window and what gets cut. There's more to it: back pressure, spec-driven workflows, sub-agents, tool budgets. But it all starts here — understanding that your context window is smaller than you think, and /clear is your best tool.